This blog post is about VMware Virtual SAN (VSAN). http://www.vmware.com/products/virtual-san

This post is based on pre-GA beta content.

All performance numbers are subject to final benchmarking results. Please refer to the guidance published at GA.

All content and media are from VMware, provided as part of the blogger program.

Please also read vSphere 6 – Clarifying the misinformation http://blogs.vmware.com/vsphere/2015/02/vsphere-6-clarifying-misinformation.html

Here is the published What’s New: VMware Virtual SAN 6.0 http://www.vmware.com/files/pdf/products/vsan/VMware_Virtual_SAN_Whats_New.pdf

Here is the published VMware Virtual SAN 6.0 datasheet: http://www.vmware.com/files/pdf/products/vsan/VMware_Virtual_SAN_Datasheet.pdf

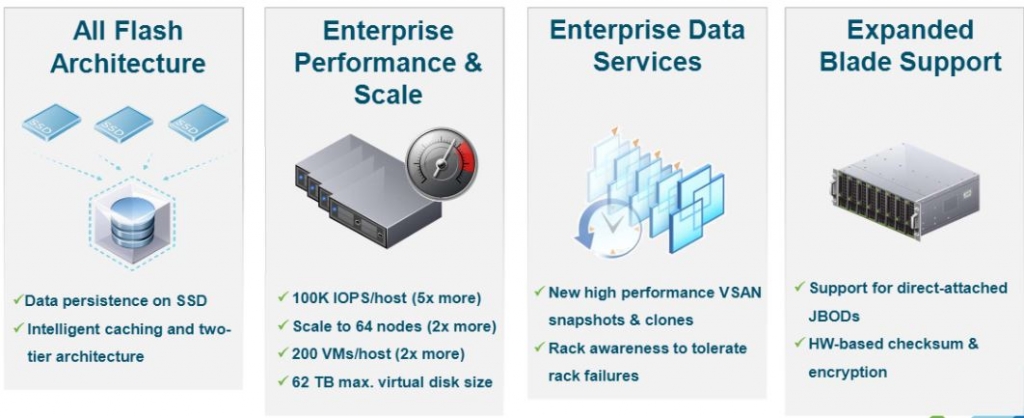

What's New?

Disk Format

- New On-Disk Format

- New delta-disk type vsanSparse

- Performance-based snapshots and clones

VSAN 5.5 to 6.0

- In-Place modular rolling upgrade

- Seamless In-place Upgrade

- Seamless Upgrade Rollback Supported

- Upgrade performed from RVC CLI

- PowerCLI integration for automation and management

Disk Serviceability Functions

- Ability to manage flash-based and magnetic devices.

- Storage consumption models for policy definition

- Default Storage Policies

- Resync Status dashboard in UI

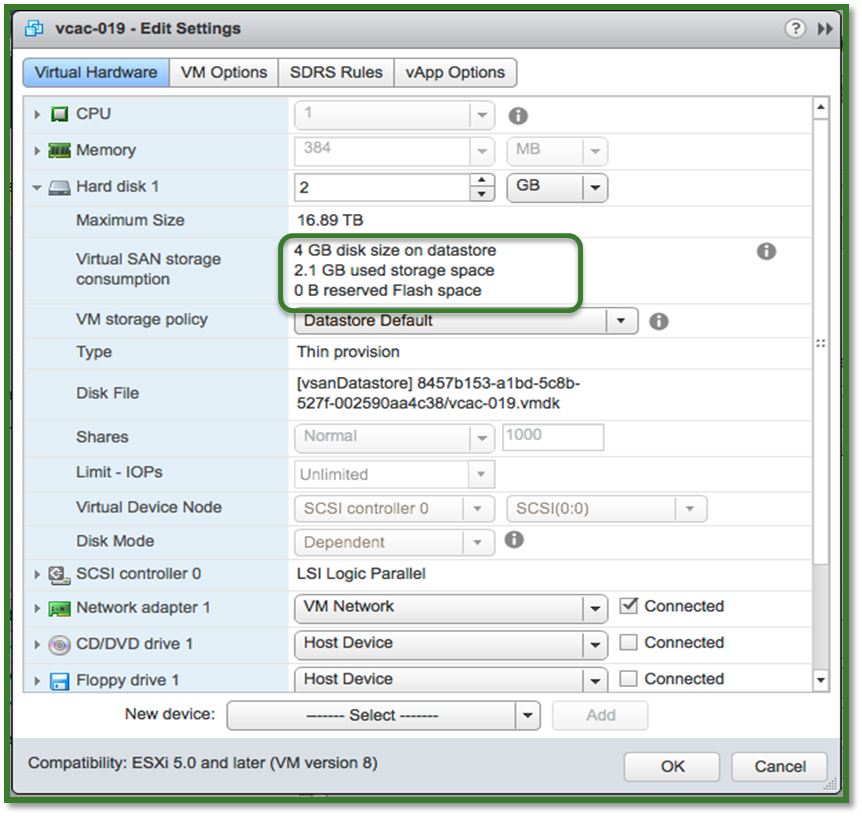

- VM capacity consumption per VMDK

- Disk/Disk group evacuation

VSAN Platform

- New Caching Architecture for all-flash VSAN

- Virtual SAN Health Services

- Proactive Rebalance

- Fault domains support

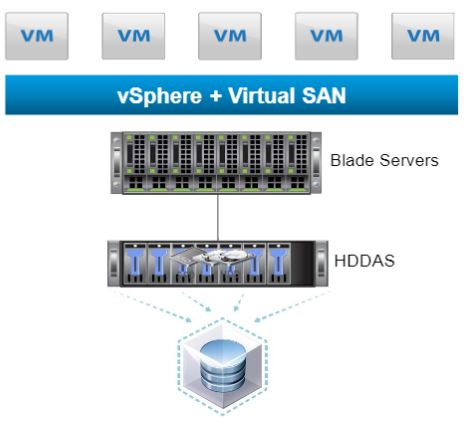

- High Density Storage Systems with Direct Attached Storage

- File Services via 3rd party

- Limited support for hardware encryption and checksum

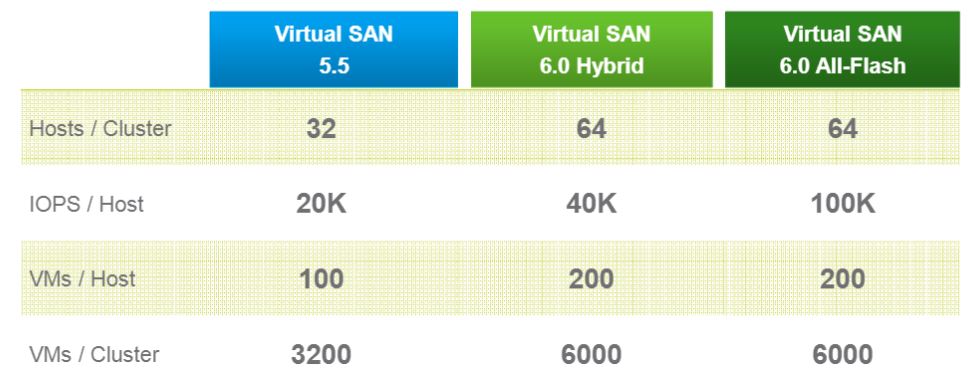

Virtual SAN Performance and Scale Improvements

2x VMs per host

- Larger Consolidation Ratios

- Due to the increase in supported components per host

- 9000 Components per Host

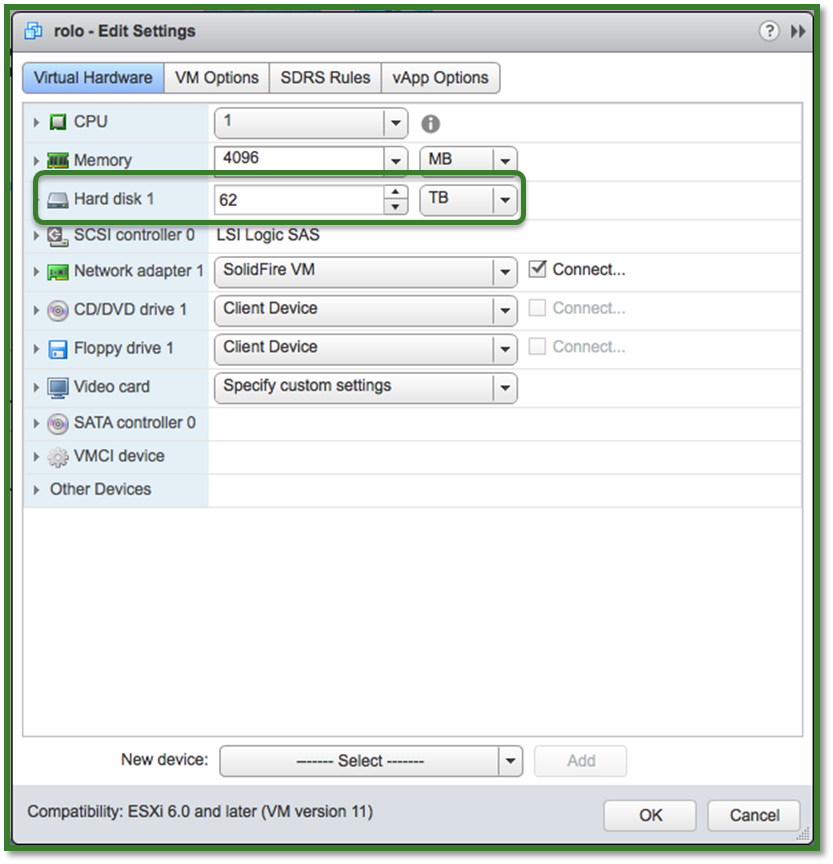

62TB Virtual Disks

- Greater capacity allocations per VMDK

- VMDK >2TB are supported

Snapshots and Clones

- Larger supported number of snapshots and clones per VM

- 32 per Virtual Machine

Host Scalability

- Cluster support raised to match vSphere

- Up to 64 nodes per cluster in vSphere

VSAN can scale up to 64 nodes

Enterprise-Class Scale and Performance

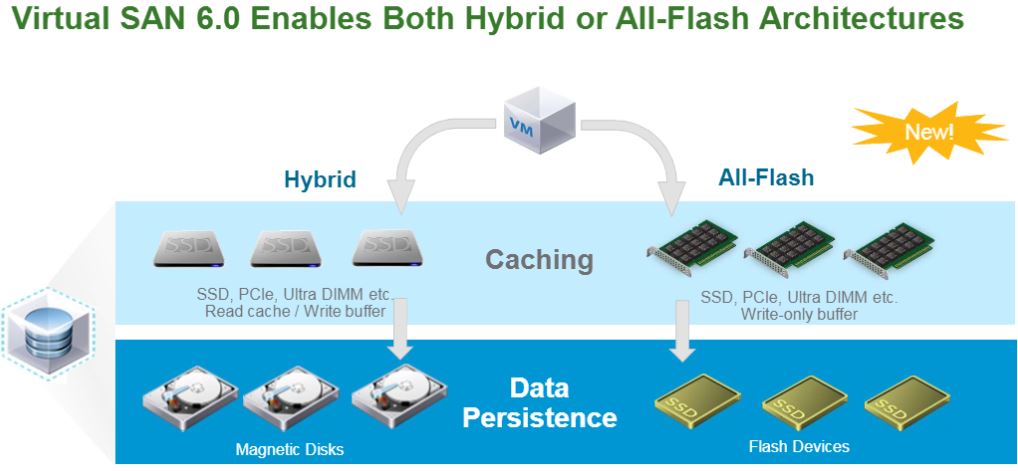

VMware Virtual SAN : All-Flash

Flash-based devices used for caching as well as persistence

Cost-effective all-flash 2-tier model:

- Cache is 100% write: using write-intensive, higher grade flash-based devices

- Persistent storage: can leverage lower cost read-intensive flash-based devices

Very high IOPS: up to 100K IOPS/Host

Consistent performance with sub-millisecond latencies

30K IOPS/Host, 100K IOPS/Host, predictable sub-millisecond latency

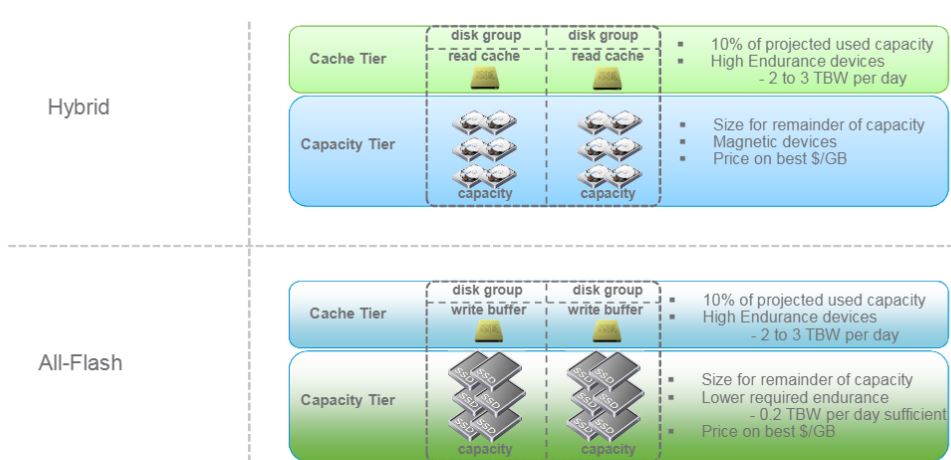

Virtual SAN Flash Caching Architectures

All-Flash Cache Tier Sizing

Cache tier should have 10% of the anticipated consumed storage capacity

| Measurement Requirements | Values |
| --- | --- |
| Projected VM space usage | 20 GB |
| Projected number of VMs | 1000 |
| Total projected space consumption | 20 GB x 1000 = 20,000 GB = 20 TB |
| Target flash cache capacity percentage | 10% |
| Total flash cache capacity required | 20 TB x 0.10 = 2 TB |

- Cache is entirely write-buffer in all-flash architecture

- Cache devices should be high write endurance models: choose 2+ TB written per day, which is 3650+ TBW over 5 years

- Total cache capacity percentage should be based on use case requirements.

–For general recommendation visit the VMware Compatibility Guide.

–For write-intensive workloads a higher amount should be configured.

–Increase cache size if expecting heavy use of snapshots
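The sizing arithmetic above is easy to script. Here is a minimal Python sketch of it, assuming decimal units (1 TB = 1000 GB) as the table does. The function names are my own and are not part of any VMware tool.

```python
def all_flash_cache_tb(vm_count, gb_per_vm, cache_percent=10):
    """Return (consumed_tb, cache_tb) for an all-flash cache tier.

    Assumes decimal units (1 TB = 1000 GB), matching the table above.
    cache_percent defaults to the 10% guideline; raise it for
    write-intensive or snapshot-heavy workloads.
    """
    consumed_tb = vm_count * gb_per_vm / 1000
    cache_tb = consumed_tb * cache_percent / 100
    return consumed_tb, cache_tb


def endurance_tbw(tb_written_per_day, years=5):
    """Total terabytes written over the period, ignoring leap days."""
    return tb_written_per_day * 365 * years


consumed, cache = all_flash_cache_tb(vm_count=1000, gb_per_vm=20)
print(f"Consumed: {consumed} TB, cache tier: {cache} TB")  # 20.0 TB and 2.0 TB
print(f"2 TB/day over 5 years: {endurance_tbw(2)} TBW")    # 3650 TBW
```

Treat the result as a starting point only; the final cache percentage should still come from the use case and the VMware Compatibility Guide.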

New On-Disk Format

- Virtual SAN 6.0 introduces a new on-disk format.

- The new on-disk format enables:

–Higher performance characteristics

–Efficient and scalable high performance snapshots and clones

–Online migration to the new format (RVC only)

- The object store will continue to mount the volumes from all hosts in a cluster and present them as a single shared datastore.

- The upgrade to the new on-disk format is optional; the on-disk format for Virtual SAN 5.5 will continue to be supported.

Performance Snapshots and Clones

- The new on-disk format in Virtual SAN 6.0 introduces a new VMDK type, vsanSparse

–Virtual SAN 5.5 snapshots were based on vmfsSparse (redo logs)

- vsanSparse based snapshots are expected to deliver performance comparable to native SAN snapshots.

–vsanSparse takes advantage of the new on-disk format writing and extended caching capabilities to deliver efficient performance.

- All disks in a vsanSparse disk-chain need to be vsanSparse (except base disk).

–Cannot create linked clones of a VM with vsanSparse snapshots on a non-vsan datastore.

–If a VM has existing redo log based snapshots, it will continue to get redo log based snapshots until the user consolidates and deletes all current snapshots.

Hardware Requirements

Flash Based Devices

In Virtual SAN hybrid, all read and write operations always go directly to the flash tier.

Flash based devices serve two purposes in Virtual SAN hybrid architecture

1. Non-volatile Write Buffer (30%)

–Writes are acknowledged when they enter the prepare stage on the flash-based devices.

–Reduces latency for writes

2. Read Cache (70%)

– Cache hits reduce read latency

– Cache miss – retrieve data from the magnetic devices
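To make the 70/30 split concrete, here is a small Python sketch that divides a hybrid flash device into read cache and write buffer. The 400 GB device size and the function name are just illustrative assumptions.

```python
def hybrid_flash_split(flash_gb, read_cache_percent=70):
    """Split a hybrid cache device into (read_cache_gb, write_buffer_gb).

    Uses the 70% read cache / 30% write buffer ratio described above.
    """
    read_cache_gb = flash_gb * read_cache_percent / 100
    write_buffer_gb = flash_gb - read_cache_gb
    return read_cache_gb, write_buffer_gb


read_gb, write_gb = hybrid_flash_split(400)  # hypothetical 400 GB SSD
print(f"Read cache: {read_gb} GB, write buffer: {write_gb} GB")  # 280.0 GB and 120.0 GB
```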

Flash Based Devices

In Virtual SAN all-flash, read and write operations always go directly to the flash devices.

Flash based devices serve two purposes in Virtual SAN All Flash:

1. Cache Tier

–High endurance flash devices.

–Listed on VCG

2. Capacity Tier

–Low endurance flash devices

–Listed on VCG

Network

•1Gb / 10Gb supported

–10Gb shared with NIOC for QoS will support most environments

–If 1Gb, dedicated links are recommended for Virtual SAN

–Layer 3 network configuration supported in 6.0

•Jumbo Frames will provide a nominal performance increase

–Enable for greenfield deployments

–Enable in large deployments to reduce CPU overhead

•Virtual SAN supports both VSS & VDS

–NetIOC requires VDS

•Network bandwidth performance has more impact on host evacuation and rebuild times than on workload performance

High Density Direct Attached Storage

–Manage disks in enclosures – helps enable blade environment

–Flash acceleration provided on the server or in the subsystem

–Data services delivered via the VSAN Data Services and platform capabilities

–Supports a combination of direct attached disks and high density attached disks (SSDs and HDDs) per disk group.

Users are expected to configure the HDDAS switch such that each disk is only seen by one host.

–VSAN protects against misconfigured HDDASs (a disk is seen by more than 1 host).

–The owner of a disk group can be explicitly changed by unmounting and restamping the disk group from the new owner.

•If a host that owns a disk group crashes, manual re-stamping can be done on another host.

–Supported HDDASs will be tightly controlled by the HCL (exact list TBD).

•Applies to HDDASs and controllers

FOLLOW the VMware HCL. www.vmware.com/go/virtualsan-hcl

Upgrade

- Virtual SAN 6.0 has a new on-disk format for disk groups and exposes a new delta-disk type, so upgrading from 1.0 to 2.0 involves more than upgrading the ESXi/vCenter software.

Upgrades are performed in multiple phases

Phase 1: Fresh deployment of, or upgrade to, vSphere 6.0

–vCenter Server

–ESXi Hypervisor

Phase 2: Disk format conversion (DFC)

–Reformat disk groups

–Object upgrade

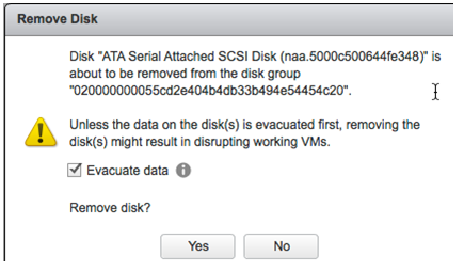

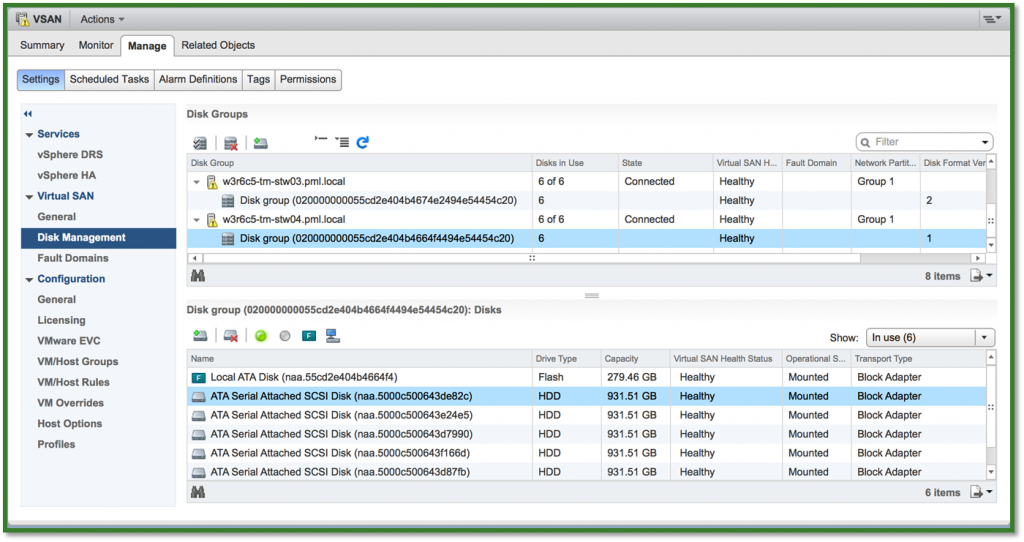

Disk/Disk Group Evacuation

- In Virtual SAN 5.5, removing a disk/disk group without data loss required placing the host in maintenance mode with full data evacuation from all of its disks/disk groups.

- Virtual SAN 6.0 introduces the ability to evacuate data from an individual disk/disk group before removing it from Virtual SAN.

- Supported in the UI, esxcli and RVC.

Check box in the “Remove disk/disk group” UI screen

Disks Serviceability

Virtual SAN 6.0 introduces a new disk serviceability feature to easily map the location of magnetic disks and flash based devices from the vSphere Web Client.

- Light LED on failures

–When a disk hits a permanent error, it can be challenging to find where that disk sits in the chassis in order to replace it.

–When an SSD or MD encounters a permanent error, VSAN automatically turns the disk LED on.

- Turn disk LED on/off

–Users might need to locate a disk, so VSAN supports manually turning an SSD or MD LED on or off.

- Marking a disk as SSD

–Some SSDs might not be recognized as SSDs by ESX.

–Disks can be tagged/untagged as SSDs.

- Marking a disk as local

–Some SSDs/MDs might not be recognized by ESX as local disks.

–Disks can be tagged/untagged as local disks.

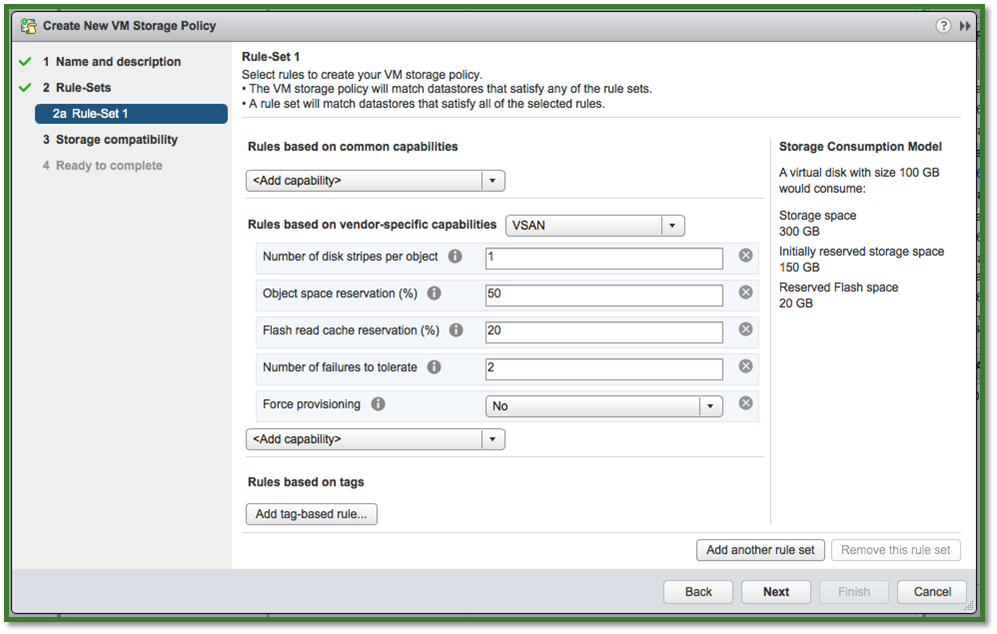

Virtual SAN Usability Improvements

- What-if-APIs (Scenarios)

- Adds functionality to visualize Virtual SAN datastore resource utilization when a VM Storage Policy is created or edited.

–Creating Policies

–Reapplying a Policy

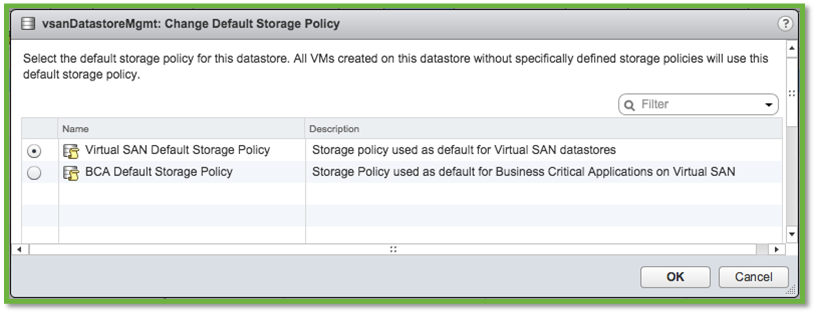

Default Storage Policies

- A Virtual SAN Default Profile is automatically created in SPBM when VSAN is enabled on a cluster.

–Default Profiles are utilized by any VM created without an explicit SPBM profile assigned.

- vSphere admins can designate a preferred VM Storage Policy as the default policy for the Virtual SAN cluster.

- vCenter can manage multiple vsanDatastores with different sets of requirements.

- Each vsanDatastore can have a different default profile assigned.

Virtual Machine Usability Improvements

- Virtual SAN 6.0 adds functionality to visualize Virtual SAN datastore resource utilization when a VM Storage Policy is created or edited.

- Virtual SAN’s free disk space is raw capacity.

–With replication, actual usable space is less.

- New UI shows real usage on:

–Flash Devices

–Magnetic Disks

Displayed in the vSphere Web Client and RVC
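As a rough illustration of why usable space is smaller than the raw free space, here is a Python sketch. It assumes mirrored replicas, where tolerating N failures means storing N + 1 full copies, and it ignores witness components and other overhead. The function name and the 40 TB figure are my own examples, not numbers from VMware.

```python
def usable_capacity_tb(raw_tb, failures_to_tolerate=1):
    """Estimate usable capacity from raw capacity under mirroring.

    Assumption: each object is stored as (failures_to_tolerate + 1)
    full replicas; witness and metadata overhead are ignored.
    """
    if failures_to_tolerate < 0:
        raise ValueError("failures_to_tolerate must be >= 0")
    replicas = failures_to_tolerate + 1
    return raw_tb / replicas


raw = 40  # hypothetical raw datastore capacity in TB
for ftt in (0, 1, 2):
    print(f"FTT={ftt}: about {usable_capacity_tb(raw, ftt):.1f} TB usable of {raw} TB raw")
```

The real numbers depend on the storage policies actually applied to each VM, which is what the new UI view is for.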

Virtual Machine >2TB VMDKs

- In VSAN 5.5, the max size of a VMDK was limited to 2TB.

–Max size of a VSAN component is 255GB.

–Max number of stripes per object was 12.

- In VSAN 6.0 the limit has been increased to allow VMDK up to 62TB.

–Objects are still striped at 255GB.

- 62TB limit is the same as VMFS and NFS so VMDK can be
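Since objects are still split at 255GB, a large VMDK simply becomes more components. Here is a minimal Python sketch of that arithmetic, assuming binary units (1 TB = 1024 GB) and counting only the data components of a single replica. The function name is mine, and replicas and witnesses would add to the total.

```python
import math

COMPONENT_MAX_GB = 255  # maximum component size stated above


def min_components_per_replica(vmdk_gb):
    """Minimum number of 255GB components needed to hold one copy of a VMDK."""
    return math.ceil(vmdk_gb / COMPONENT_MAX_GB)


for size_tb in (2, 62):
    size_gb = size_tb * 1024  # assumption: binary units
    count = min_components_per_replica(size_gb)
    print(f"{size_tb}TB VMDK -> at least {count} components per replica")
```

This also shows why the higher per-host component limit matters once VMDKs grow past 2TB.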

There it is. I tried to lay it out as best I could.

Want to try it? Try the Virtual SAN Hosted Evaluation https://my.vmware.com/web/vmware/evalcenter?p=vsan6-hol

What’s New in Virtual SAN 6

Learn how to deploy, configure, and manage VMware’s latest hypervisor-converged storage solution.


Roger Lund